
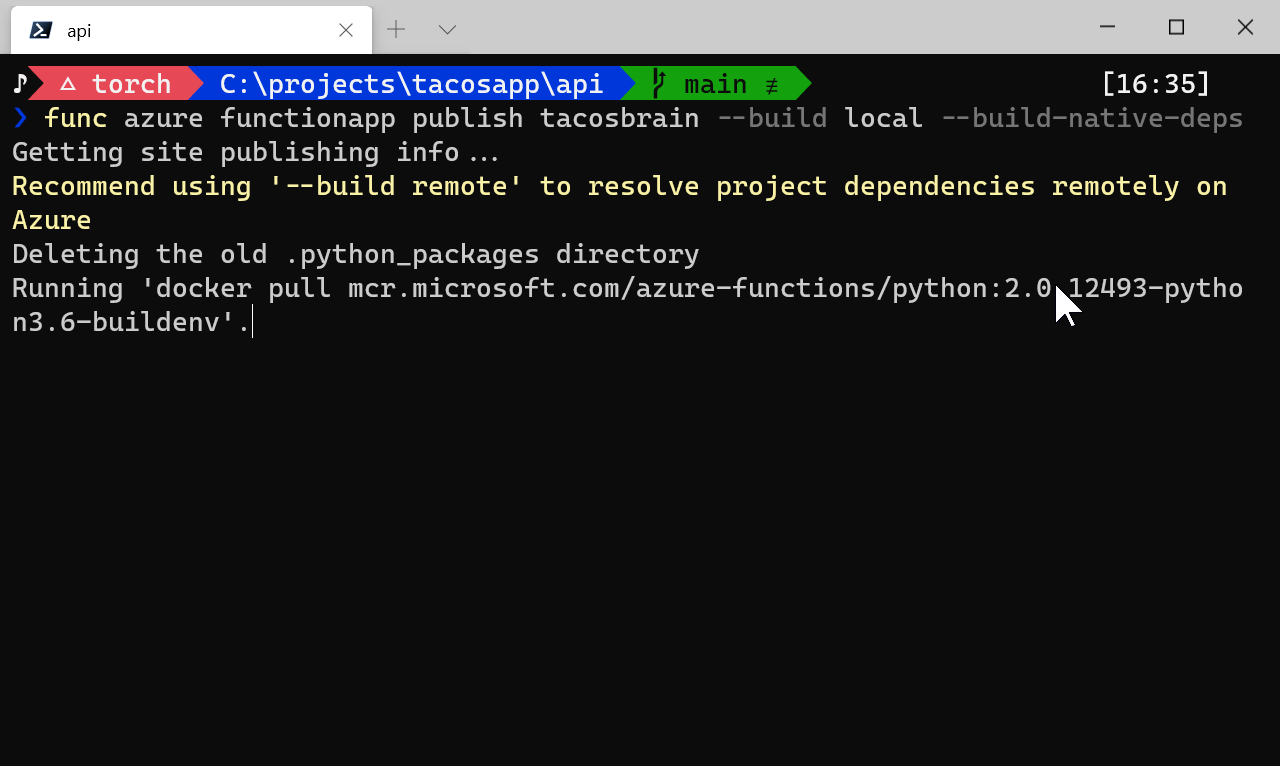

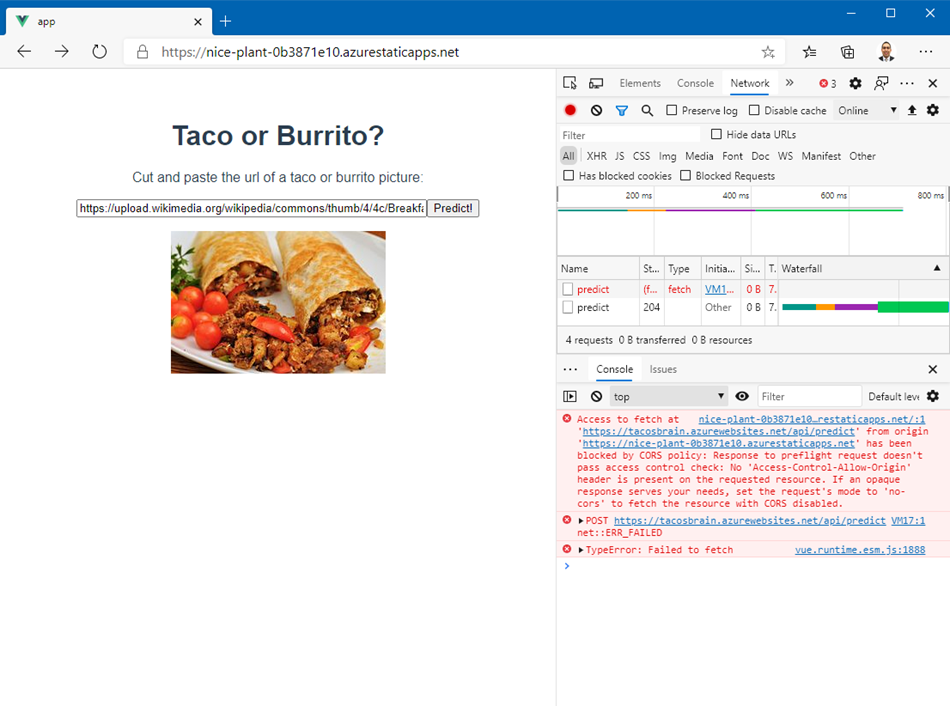

Fixing Mixed-Content and CORS issues at ML Model inference time with Azure Functions

This is a follow-up to the previous post on deploying an ONNX model using Azure Functions. Suppose you've got the API working great and you want to include this amazing functionality on a brand new website. What follows is the harrowing path…

Machine Learning Azure Functions PyTorch